What Is AI Safety and Why It Matters More Than Ever

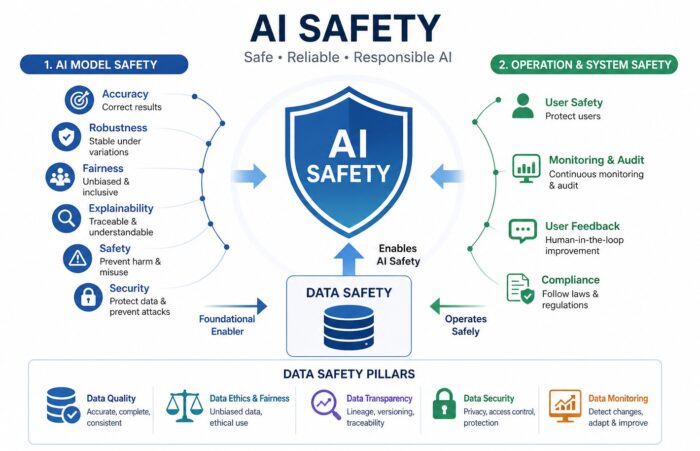

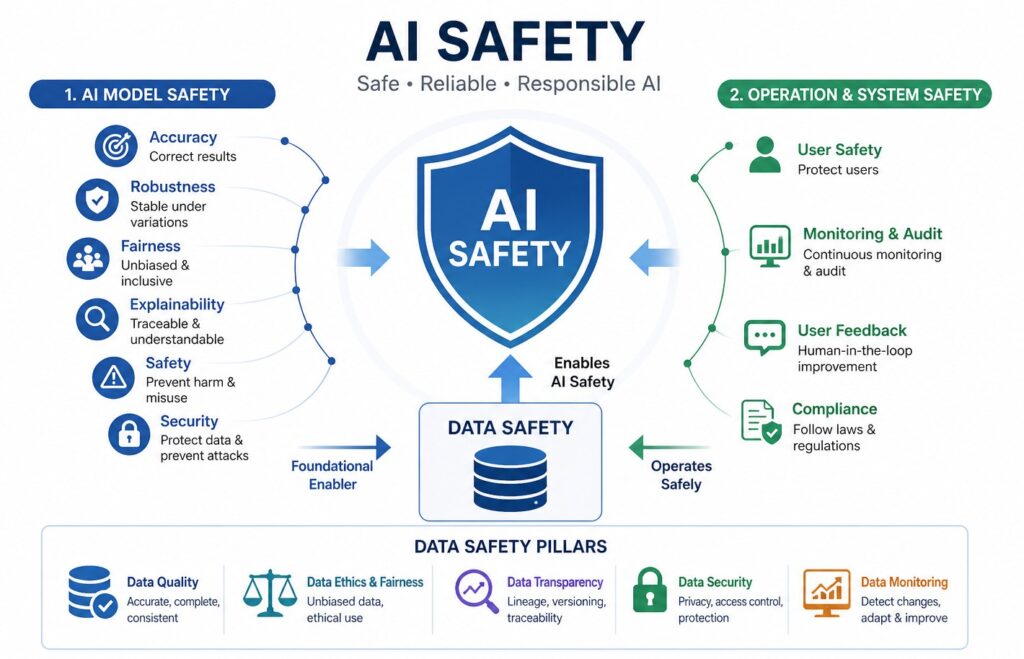

AI safety is not just a technical concept—it is a practical requirement for building systems that people can trust. At its core, AI safety means minimizing the risks of system failures, harmful outputs, or unintended behaviors, while ensuring that AI systems operate in ways that are reliable, beneficial, and aligned with human expectations.

This becomes especially important in high-impact industries such as healthcare, finance, and public services. A misdiagnosis from an AI-powered medical system or a biased credit scoring model can lead to real-world harm. AI safety can be broadly divided into two key areas:

- AI model safety, which focuses on the model itself

- AI system and operational safety, which focuses on how AI behaves in real-world environments

Both areas share a common foundation: data.

Understanding AI Model Safety Through Six Core Dimensions

AI models are only as good—and as safe—as the data they are trained on. To evaluate AI model safety, we typically look at six key dimensions:

- Accuracy: Whether the model produces correct and reliable results

- Robustness: Whether the model remains stable under changing inputs

- Fairness: Whether the model avoids bias and discrimination

- Explainability: Whether decisions can be understood and traced

- Safety: Whether the model avoids harmful outputs

- Security: Whether the model and data are protected from threats

These dimensions are deeply interconnected. For example, poor data quality can reduce accuracy, increase bias, and weaken robustness all at once. This is why data safety plays a central role in enabling every aspect of AI safety.

AI System and Operational Safety in Real-World Environments

Beyond the model itself, AI systems must function safely within dynamic environments. This includes how AI interacts with users, how it is monitored, and how it complies with regulations. Key aspects of AI system safety include:

- User Safety: Preventing harmful or misleading outputs

- Monitoring and Audit: Tracking decisions and system behavior

- User Feedback: Incorporating human oversight and correction

- Compliance: Meeting legal and regulatory requirements

For example, the European Union's AI Act, proposed by the European Commission and in force since August 2024, emphasizes transparency, accountability, and risk management for AI systems. This reflects a global shift toward more structured AI governance.

1) AI Safety & Data Safety: Why Data Is the Deciding Factor

Data Safety Is Not Optional—It Is Foundational

As AI adoption accelerates, one truth becomes clear: AI systems are entirely dependent on data. They learn from data, make decisions based on data, and evolve as data changes. This means AI safety is ultimately determined by how data is collected, validated, managed, and protected.

To better understand this relationship, let’s explore how data safety operates across each dimension of AI safety.

1.1) AI Accuracy

Accuracy starts with data. If inaccurate or incomplete data enters the system, the model cannot produce reliable results. Data safety ensures accuracy by enforcing a structured and continuous validation process:

- Data ingestion with validation rules

- Detection of anomalies and outliers

- Enforcement of quality thresholds

- Continuous monitoring of data quality over time

This is not a one-time effort. Data evolves, and so must the systems that monitor it. Automating data quality monitoring lets teams catch quality regressions before they reach the model, which is where improvements in performance and reliability tend to come from.
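The validation steps listed above can be sketched in a few lines of Python. This is a minimal illustration, not a production pipeline: the field names, the ranges in `RULES`, and the 95% quality threshold are all assumptions chosen for the example. Outliers are flagged with the modified z-score (median and median absolute deviation), which is one common choice among many.

```python
from statistics import median

# Hypothetical validation rules for illustration: field name -> (min, max).
RULES = {"age": (0, 120), "income": (0, 10_000_000)}


def validate_record(record, rules=RULES):
    """Return a list of rule violations for one record (empty list = valid)."""
    problems = []
    for field, (low, high) in rules.items():
        value = record.get(field)
        if value is None:
            problems.append(f"{field}: missing")
        elif not (low <= value <= high):
            problems.append(f"{field}: {value} outside [{low}, {high}]")
    return problems


def find_outliers(values, threshold=3.5):
    """Flag outliers using the modified z-score (median and MAD)."""
    med = median(values)
    mad = median([abs(v - med) for v in values])
    if mad == 0:
        return []  # no spread to measure against, so nothing is flagged
    return [v for v in values if 0.6745 * abs(v - med) / mad > threshold]


def passes_quality_gate(records, min_valid_ratio=0.95):
    """Enforce a quality threshold on the share of valid records in a batch."""
    if not records:
        return False
    valid = sum(1 for r in records if not validate_record(r))
    return valid / len(records) >= min_valid_ratio
```

Running `passes_quality_gate` on every incoming batch, rather than once at project start, is what turns these checks into continuous monitoring.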

1.2) AI Robustness

Robustness refers to the ability of AI systems to remain stable even when inputs change slightly. In real-world scenarios, data is rarely clean or predictable. To support robustness, data safety must:

- Include diverse and representative datasets

- Prevent overfitting to narrow data distributions

- Continuously detect and respond to data drift

For instance, platforms like Netflix continually update their recommendation systems as user behavior evolves, so that performance stays consistent over time. The key idea here is simple: data safety enables adaptation, not just prevention.
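One way to detect data drift is to compare the distribution of a feature in recent data against a reference sample. The sketch below uses the Population Stability Index (PSI), which is a swapped-in technique for illustration and not something the approaches above prescribe. The bin count and the smoothing constant are assumptions.

```python
import math


def population_stability_index(reference, current, bins=10):
    """Compute PSI between a reference sample and a current sample.

    Bins are equal-width over the range of the reference sample; values in
    the current sample that fall outside that range go into the edge bins.
    """
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins
    if width == 0:
        width = 1.0  # constant reference feature: avoid division by zero

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp into the valid bin range
            counts[idx] += 1
        eps = 1e-6  # small smoothing term so empty bins do not produce log(0)
        total = len(values) + bins * eps
        return [(c + eps) / total for c in counts]

    ref_p = proportions(reference)
    cur_p = proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_p, cur_p))
```

A commonly quoted rule of thumb treats PSI below about 0.1 as stable and above about 0.25 as a significant shift. These cutoffs are conventions rather than a standard, so they should be tuned per feature.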

1.3) AI Fairness

Fairness is one of the most visible—and sensitive—issues in AI. When data is biased, AI systems can produce discriminatory outcomes. To ensure fairness, data safety must go beyond basic quality checks and include:

- Balanced representation across key demographic attributes

- Continuous monitoring of fairness metrics

- Bias mitigation techniques such as reweighting and resampling
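As an illustration of reweighting, the sketch below assigns each training example the weight P(group) × P(label) / P(group, label), a standard formulation that makes group membership and label statistically independent in the weighted data. The function name and inputs are hypothetical.

```python
from collections import Counter


def reweighing_weights(groups, labels):
    """Return one weight per example: P(group) * P(label) / P(group, label)."""
    n = len(groups)
    group_counts = Counter(groups)
    label_counts = Counter(labels)
    joint_counts = Counter(zip(groups, labels))
    return [
        (group_counts[g] * label_counts[y]) / (n * joint_counts[(g, y)])
        for g, y in zip(groups, labels)
    ]
```

Examples from under-represented (group, label) combinations receive weights above 1, and over-represented combinations receive weights below 1. The weights can then be passed to any training routine that accepts per-sample weights.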

Common fairness metrics include:

- Demographic parity

- Equal opportunity

- Error rate balance

Fairness is not something you “fix once.” It requires continuous monitoring and governance.
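The three metrics above can each be expressed as a gap between groups. Demographic parity compares selection rates (the share of positive predictions), equal opportunity compares true positive rates, and error rate balance compares error rates such as the false positive rate. The following is a minimal sketch with hypothetical function names, assuming binary labels and predictions encoded as 0 and 1.

```python
def group_rates(y_true, y_pred, groups):
    """Compute selection rate, TPR, and FPR for each group."""
    stats = {}
    for t, p, g in zip(y_true, y_pred, groups):
        s = stats.setdefault(
            g, {"n": 0, "pred_pos": 0, "pos": 0, "neg": 0, "tp": 0, "fp": 0}
        )
        s["n"] += 1
        s["pred_pos"] += p
        if t == 1:
            s["pos"] += 1
            s["tp"] += p
        else:
            s["neg"] += 1
            s["fp"] += p
    return {
        g: {
            "selection_rate": s["pred_pos"] / s["n"],
            "tpr": s["tp"] / s["pos"] if s["pos"] else float("nan"),
            "fpr": s["fp"] / s["neg"] if s["neg"] else float("nan"),
        }
        for g, s in stats.items()
    }


def max_gap(rates, key):
    """Largest difference in one rate across groups (0 means parity)."""
    values = [r[key] for r in rates.values()]
    return max(values) - min(values)
```

For example, `max_gap(rates, "selection_rate")` gives the demographic parity gap and `max_gap(rates, "tpr")` gives the equal opportunity gap. Recomputing these on each new batch of predictions is one way to make fairness monitoring continuous.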

1.4) AI Explainability

Explainability answers a critical question: Why did the AI make this decision?

Without proper data management, this question becomes impossible to answer. Data safety enables explainability through:

- Data lineage tracking (understanding where data comes from)

- Version control (tracking changes over time)

- Traceability (linking data to model outputs)

In regulated industries, explainability is often expected or required. For example, the U.S. Food and Drug Administration has published guiding principles on transparency for machine learning-enabled medical devices.
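To make lineage, versioning, and traceability concrete, the sketch below fingerprints a dataset by hashing its contents, records where each version came from, and walks those records back from a single prediction to the raw source. All class names, field names, and the storage format are assumptions for illustration. Real systems typically rely on a dedicated data catalog or versioning tool.

```python
import hashlib
import json
from dataclasses import dataclass
from typing import Optional


def dataset_fingerprint(rows):
    """Content hash of a dataset; any change to the rows changes the version."""
    canonical = json.dumps(rows, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class LineageRecord:
    dataset_version: str           # fingerprint of this dataset
    source: str                    # where the data came from
    parent_version: Optional[str]  # version it was derived from, if any
    transformation: str            # what was done to the parent


@dataclass(frozen=True)
class PredictionTrace:
    prediction_id: str
    model_version: str
    dataset_version: str           # training data behind the model


def trace_to_source(trace, lineage_by_version):
    """Follow parent links from the training dataset back to the raw source."""
    chain = []
    version = trace.dataset_version
    while version is not None:
        record = lineage_by_version[version]
        chain.append(record)
        version = record.parent_version
    return chain
```

Given a questioned prediction, `trace_to_source` returns every dataset version and transformation that contributed to it, which is the raw material for answering "why did the AI make this decision?"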

1.5) AI Safety

AI systems must avoid generating harmful or dangerous outputs. Data safety plays a critical role by acting as a preemptive filter. Examples of data that must be controlled include:

- Violent or hateful content

- Illegal or unethical information

- Instructions that could cause harm

Modern AI systems combine automated filtering with human oversight to manage these risks effectively. Preventing harmful inputs is often more effective than correcting harmful outputs later.
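The combination of automated filtering and human oversight can be sketched as a three-way triage: clear violations are rejected automatically, ambiguous items are queued for a human reviewer, and everything else is accepted. The patterns below are placeholder assumptions. Production systems generally use trained classifiers rather than keyword lists.

```python
import re

# Hypothetical patterns for illustration only.
BLOCK_PATTERNS = [re.compile(r"\battack instructions\b", re.IGNORECASE)]
REVIEW_PATTERNS = [re.compile(r"\bweapons?\b", re.IGNORECASE)]


def triage(text):
    """Classify one training example as 'reject', 'review', or 'accept'."""
    if any(p.search(text) for p in BLOCK_PATTERNS):
        return "reject"   # clear violation: filtered out automatically
    if any(p.search(text) for p in REVIEW_PATTERNS):
        return "review"   # ambiguous: routed to a human reviewer
    return "accept"


def filter_corpus(texts):
    """Split a corpus into accepted, review-queue, and rejected buckets."""
    buckets = {"accept": [], "review": [], "reject": []}
    for text in texts:
        buckets[triage(text)].append(text)
    return buckets
```

Only the `accept` bucket would flow into training. The `review` bucket keeps a human in the loop for cases where context matters, such as historical or educational text.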

1.6) AI Security

Security is one of the most critical aspects of AI safety. If data is compromised, the entire system is at risk. Data safety ensures security through:

- Encryption (both at rest and in transit)

- Role-based access control (RBAC)

- Audit logs and traceability

- Threat detection and response systems
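Two of the controls above, role-based access control and audit logging, can be sketched together. In this illustration every access decision is written to a hash-chained log, so that later tampering with an entry is detectable. The roles, permissions, and log format are assumptions. Encryption is out of scope for this sketch and should come from a vetted cryptography library.

```python
import hashlib
import json

# Hypothetical role-to-permission mapping for illustration.
ROLE_PERMISSIONS = {
    "data_engineer": {"read", "write"},
    "analyst": {"read"},
}

GENESIS_HASH = "0" * 64


def _digest(body):
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    """Append-only log where each entry includes the hash of the previous one."""

    def __init__(self):
        self.entries = []

    def append(self, user, role, action, allowed):
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        body = {"user": user, "role": role, "action": action,
                "allowed": allowed, "prev_hash": prev_hash}
        self.entries.append({**body, "hash": _digest(body)})

    def verify(self):
        """Return True if no entry has been altered, removed, or reordered."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True


def authorize(user, role, action, log):
    """Check the role's permissions and record the decision in the audit log."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    log.append(user, role, action, allowed)
    return allowed
```

Logging denied requests as well as granted ones matters here: repeated denials are often the earliest signal available to a threat detection process.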

2) Data Safety in AI Operations

AI systems operate in dynamic environments where data is constantly changing. This means data safety must function as an ongoing operational system, not a static control. Key components include:

- Real-time data monitoring

- Continuous audit and logging

- Integration of user feedback

- Regulatory compliance management

User feedback plays a particularly important role. It creates a cycle of improvement:

Better data → Better models → Safer outcomes → Increased trust

Companies like Google and Microsoft actively use feedback loops to refine AI performance and safety over time.
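A feedback loop of this kind can be approximated with a rolling window over recent user feedback. The sketch below flags the system for review when the share of negative feedback exceeds a threshold. The class name, window size, and threshold values are assumptions for illustration and do not describe how any particular company implements this.

```python
from collections import deque


class FeedbackMonitor:
    """Track recent user feedback and flag when negative feedback spikes."""

    def __init__(self, window=100, max_negative_rate=0.2, min_samples=10):
        self.events = deque(maxlen=window)  # oldest events drop off automatically
        self.max_negative_rate = max_negative_rate
        self.min_samples = min_samples

    def record(self, is_negative):
        """Store one feedback event (True = user reported a problem)."""
        self.events.append(bool(is_negative))

    def negative_rate(self):
        return sum(self.events) / len(self.events) if self.events else 0.0

    def needs_review(self):
        """True once enough feedback exists and the negative rate is too high."""
        return (
            len(self.events) >= self.min_samples
            and self.negative_rate() > self.max_negative_rate
        )
```

When `needs_review()` returns true, the flagged examples can be routed back into data validation and retraining, which closes the cycle described above.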

Conclusion

AI safety is not just about building better algorithms. It is about how data is collected, managed, controlled, and used across the entire lifecycle. Every key dimension of AI safety—accuracy, robustness, fairness, explainability, safety, and security—depends on data safety functioning correctly.

To build trustworthy AI systems, organizations must adopt a data-centric strategy that includes:

- Continuous data quality management

- Bias detection and ethical governance

- Transparent and traceable data systems

- Strong data security frameworks

- Real-time monitoring and adaptive responses

When these elements work together, data safety becomes more than a technical requirement—it becomes a strategic advantage.