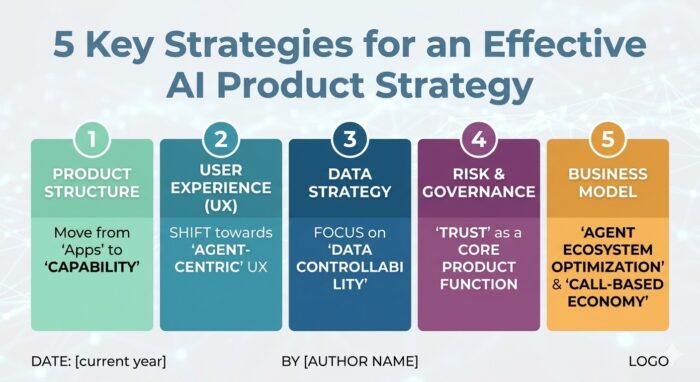

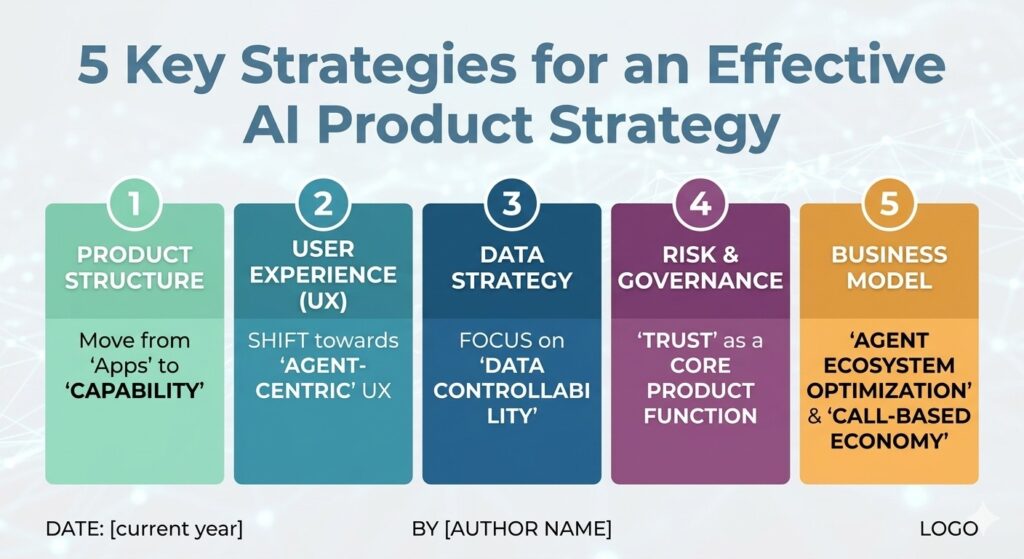

AI Product Strategy for the Next Computing Paradigm

AI agents are rapidly changing the way digital products are designed, used, and monetized. New agents are released almost daily for specialized domains such as software development, customer support, research, finance, healthcare, and productivity. At the same time, the idea of AI “Super Apps” is gaining ground: a single AI agent that coordinates multiple services and completes complex workflows on behalf of users.

This shift means that simply adding AI functionality to an existing application is no longer enough. In the emerging AI ecosystem, products will increasingly compete based on whether AI agents can easily discover, understand, trust, and use them. Software is no longer evolving only for direct human interaction. It is increasingly being designed for autonomous AI systems that select and execute services dynamically.

As a result, AI agent-based products differ fundamentally from traditional software in five areas: product architecture, user experience, data management, risk and governance, and business models.

1. Product Architecture — From “Standalone Execution” to “Function Calling”

Traditional software products were designed around the assumption that users would directly open applications and manually interact with them. Users downloaded apps, clicked buttons, navigated menus, and completed workflows step by step. In this model, the application itself was the center of the experience.

AI agent environments change this structure completely. Instead of users directly interacting with multiple applications, AI agents increasingly perform tasks on behalf of users. The AI interprets a user’s intent, selects the necessary tools, and coordinates workflows automatically. This means products are no longer simply standalone applications. They are becoming collections of callable capabilities that AI systems can access when needed.

Because of this shift, modern AI-ready products must be designed with API-centric and modular architectures. Functions should be independently accessible, easy for AI systems to understand, and structured in a way that supports orchestration across multiple workflows.

This architectural transition is already becoming visible across the industry. Companies such as OpenAI and Anthropic are increasingly focusing on function calling, tool usage, and interoperable AI ecosystems. Anthropic’s Model Context Protocol (MCP), for example, was designed to help AI systems securely connect with external tools and services through standardized interfaces.
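To make the idea of a product as a set of callable capabilities more concrete, here is a minimal Python sketch (assuming Python 3.9+). Each capability carries a name, a plain-language description, and a JSON Schema for its inputs. An agent reads the `describe()` output to discover what exists, then issues a call as a function name plus JSON-encoded arguments. `Capability`, `CapabilityRegistry`, and `search_flights` are hypothetical names. The descriptor fields only loosely mirror the `name`, `description`, and `inputSchema` fields that MCP uses for tools; this is not an MCP client or server.

```python
import json
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class Capability:
    """One independently callable product function plus its machine-readable description."""
    name: str
    description: str
    input_schema: dict[str, Any]  # JSON Schema for the expected arguments
    handler: Callable[..., dict[str, Any]]


class CapabilityRegistry:
    """Hypothetical registry: lets an agent discover capabilities and call them by name."""

    def __init__(self) -> None:
        self._caps: dict[str, Capability] = {}

    def register(self, cap: Capability) -> None:
        self._caps[cap.name] = cap

    def describe(self) -> list[dict[str, Any]]:
        # The discovery view an agent would read before deciding what to call.
        return [
            {"name": c.name, "description": c.description, "inputSchema": c.input_schema}
            for c in self._caps.values()
        ]

    def call(self, name: str, arguments_json: str) -> dict[str, Any]:
        # A tool call arrives as a function name plus JSON-encoded arguments.
        cap = self._caps.get(name)
        if cap is None:
            return {"ok": False, "error": f"unknown capability: {name}"}
        return cap.handler(**json.loads(arguments_json))


def search_flights(origin: str, destination: str, date: str) -> dict[str, Any]:
    # Hypothetical handler returning canned data for illustration.
    return {"ok": True, "results": [{"flight": "XY123", "from": origin, "to": destination, "date": date}]}


registry = CapabilityRegistry()
registry.register(Capability(
    name="search_flights",
    description="Search one-way flights between two IATA airport codes on a date (YYYY-MM-DD).",
    input_schema={
        "type": "object",
        "properties": {
            "origin": {"type": "string"},
            "destination": {"type": "string"},
            "date": {"type": "string"},
        },
        "required": ["origin", "destination", "date"],
    },
    handler=search_flights,
))

print(json.dumps(registry.describe(), indent=2))
print(registry.call("search_flights", '{"origin": "ICN", "destination": "SFO", "date": "2025-03-01"}'))
```

Keeping each handler independent of the registry is what allows the same capability to be reused by different orchestrators or exposed later through a standard protocol.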

In the AI agent era, software products are no longer competing only on visual design or interface quality. They are increasingly competing on how effectively AI systems can integrate with them and execute their capabilities.

2. User Experience — From “Human-Centered UX” to UX Designed for Both Humans and AI

For decades, UX design focused almost entirely on human usability. The goal was to make applications intuitive, visually appealing, and easy to navigate. Designers optimized layouts, menus, workflows, and interactions to reduce friction for users.

While those principles remain important, AI agent ecosystems add a new requirement: products must also be understandable to AI systems. This gives rise to what can be called AI-friendly UX.

In AI-driven environments, products need more than clean interfaces. AI agents must clearly understand what each function does, what inputs are required, what outputs are generated, and how workflows should be executed. Products that expose ambiguous structures or inconsistent workflows become difficult for AI systems to use reliably.

As a result, AI-ready products increasingly require structured APIs, standardized outputs, machine-readable documentation, semantic workflows, and clearly defined function descriptions.
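As an illustration of “clearly defined function descriptions” and “standardized outputs,” the sketch below checks an agent's arguments against a declared contract and always answers in the same response envelope. The `book_hotel` function, the `BOOK_HOTEL_PARAMS` contract, and the envelope shape (`status`, `data`, `error`) are assumptions made for illustration. A real service would more likely validate against its published JSON Schema using a dedicated library.

```python
from typing import Any

# Hypothetical contract for a booking function: argument name -> expected Python type.
BOOK_HOTEL_PARAMS: dict[str, type] = {"hotel_id": str, "check_in": str, "nights": int}


def validate_args(args: dict[str, Any], params: dict[str, type]) -> list[str]:
    """Return a list of problems; an empty list means the arguments match the contract."""
    problems = []
    for key, expected in params.items():
        if key not in args:
            problems.append(f"missing required argument '{key}'")
            continue
        value = args[key]
        # bool is a subclass of int in Python, so reject it explicitly for int fields.
        if not isinstance(value, expected) or (expected is int and isinstance(value, bool)):
            problems.append(f"argument '{key}' must be {expected.__name__}")
    for key in args:
        if key not in params:
            problems.append(f"unexpected argument '{key}'")
    return problems


def book_hotel(args: dict[str, Any]) -> dict[str, Any]:
    """Every response uses the same envelope, so an agent can branch on 'status' instead of parsing prose."""
    problems = validate_args(args, BOOK_HOTEL_PARAMS)
    if problems:
        return {"status": "error", "error": {"code": "INVALID_ARGUMENTS", "details": problems}}
    # Placeholder result; a real implementation would call the booking backend here.
    return {"status": "ok", "data": {"hotel_id": args["hotel_id"], "nights": args["nights"]}}


print(book_hotel({"hotel_id": "H42", "check_in": "2025-03-01", "nights": "two"}))
```

Calling it with `nights` given as the string `"two"` returns an error envelope whose `details` list reads `argument 'nights' must be int`. An agent can act on that directly, for example by correcting the argument or asking the user.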

For example, imagine an AI travel assistant booking flights, hotels, and transportation for a user. If travel platforms provide inconsistent pricing formats or unclear reservation workflows, the AI agent may fail to complete tasks accurately. On the other hand, platforms with standardized and AI-readable interfaces become much easier for AI agents to integrate into automated workflows.
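In the travel example, price formatting is one concrete source of inconsistency. The sketch below shows a normalization step that a platform, or an adapter in front of it, might apply. It converts loosely formatted price strings into an integer amount in minor units plus an ISO 4217 currency code. The symbol table and parsing rules are simplifying assumptions, stated in the docstring. They handle the sample inputs shown but not, for instance, European-style decimal commas.

```python
import re
from decimal import Decimal

# Hypothetical symbol table; a production adapter would need a complete ISO 4217 mapping.
SYMBOL_TO_CODE = {"$": "USD", "€": "EUR", "£": "GBP"}


def normalize_price(raw: str) -> dict:
    """Turn strings like '$1,234.50' or '1234.5 EUR' into {'amount_minor': int, 'currency': str}.

    Assumes ',' is a thousands separator and '.' the decimal point, and that the
    currency has two minor-unit digits (not true for e.g. JPY).
    """
    text = raw.strip()
    currency = None
    for symbol, code in SYMBOL_TO_CODE.items():
        if symbol in text:
            currency = code
            text = text.replace(symbol, "")
    # An explicit three-letter code, if present, takes precedence over a symbol.
    code_match = re.search(r"\b[A-Z]{3}\b", text)
    if code_match:
        currency = code_match.group(0)
        text = text.replace(currency, "")
    if currency is None:
        raise ValueError(f"no currency found in {raw!r}")
    amount = Decimal(text.replace(",", "").strip())
    # int() truncates any digits beyond the second decimal place.
    return {"amount_minor": int(amount * 100), "currency": currency}


for raw in ["$1,234.50", "1234.5 EUR", "£89.99"]:
    print(raw, "->", normalize_price(raw))
```

Integer minor units avoid floating-point rounding, so an agent comparing offers from several platforms can sort and sum prices reliably.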

This shift becomes even more important as conversational AI interfaces replace traditional app navigation. In the future, users may no longer manually browse dozens of applications and menus. Instead, they may simply describe their intent to an AI assistant, which will coordinate the required services automatically.

The future of UX is therefore becoming dual-layered. Products must remain intuitive for human users while simultaneously exposing structures that AI agents can easily understand and execute.

3. Data Management — From “More Data” to “Better Data Control”

In the early stages of AI adoption, many organizations believed that competitive advantage depended mainly on collecting as much data as possible. More data was often viewed as the key to building better AI models and improving personalization.

In AI agent ecosystems, however, the conversation is shifting from data quantity to data control. AI agents frequently operate across multiple applications, systems, and services simultaneously. As AI Super Apps evolve, a single AI assistant may gain access to financial systems, emails, healthcare services, enterprise workflows, shopping platforms, and personal schedules all at once.

This concentration of access creates significant risks related to privacy, security, and governance. Imagine a future AI assistant capable of reading emails, purchasing products, managing healthcare appointments, accessing enterprise systems, and handling financial transactions. If that agent is poorly governed or compromised, a single failure could expose private data or trigger unauthorized actions across every service it can reach.

Because of this, AI agent-based products require much stronger controls around data access and permissions. Companies must focus not only on collecting data, but also on governing how data is accessed, shared, and controlled.

This includes principles such as minimizing unnecessary data usage, separating sensitive information, implementing permission-based access controls, and providing users with greater transparency over how their information is being used.
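Permission-based access and data minimization can be sketched in a few lines. In the hypothetical model below, a user grants an agent named scopes, and every access is denied unless the scope was granted. The agent only ever receives the record fields its scopes cover, and designated sensitive fields are held back entirely. The scope names, field lists, and `AgentGrant` type are illustrative assumptions, not a standard.

```python
from dataclasses import dataclass, field

# Hypothetical scope model: each scope unlocks a specific set of record fields.
FIELDS_BY_SCOPE = {
    "profile:basic": {"name"},
    "calendar:read": {"events"},
    "payments:read": {"card_last4"},
}
# Fields kept out of agent-facing views regardless of scopes (defense in depth).
SENSITIVE_FIELDS = {"card_number", "diagnoses"}


@dataclass
class AgentGrant:
    """The permissions a user has granted to one agent."""
    agent_id: str
    scopes: set[str] = field(default_factory=set)


def authorize(grant: AgentGrant, required_scope: str) -> None:
    """Deny by default: raise unless the user explicitly granted the scope."""
    if required_scope not in grant.scopes:
        raise PermissionError(f"{grant.agent_id} lacks scope {required_scope!r}")


def minimized_view(record: dict, grant: AgentGrant) -> dict:
    """Return only the fields the granted scopes cover, never the sensitive ones."""
    allowed: set[str] = set()
    for scope in grant.scopes:
        allowed |= FIELDS_BY_SCOPE.get(scope, set())
    return {k: v for k, v in record.items() if k in allowed and k not in SENSITIVE_FIELDS}


user_record = {
    "name": "Alex",
    "events": ["Dentist, Tue 10:00"],
    "card_last4": "4242",
    "card_number": "4242424242424242",  # fake test number
    "diagnoses": ["(redacted)"],
}
travel_agent = AgentGrant("travel-agent", {"profile:basic", "calendar:read"})

print(minimized_view(user_record, travel_agent))  # only name and events
try:
    authorize(travel_agent, "payments:read")
except PermissionError as err:
    print("denied:", err)
```

Here the travel agent sees the user's name and calendar events but no payment data, and an attempt to use `payments:read` is refused until the user grants that scope.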

Regulators and standards bodies are already responding to these concerns. The AI Risk Management Framework from the U.S. National Institute of Standards and Technology (NIST), a voluntary framework rather than a regulation, emphasizes transparency, governance, and accountability in AI systems. Similarly, the European Union AI Act places strong emphasis on human oversight, risk management, and responsible AI deployment.

In the future, users are likely to trust products not because they collect the most data, but because they manage data responsibly and transparently.

4. Risk and Governance — Understanding the Process Becomes Essential for Trust

Traditional software systems generally operated through predictable and predefined workflows. AI agents are fundamentally different because they can make autonomous or semi-autonomous decisions and perform actions on behalf of users. This creates a major challenge: trust.

As AI agents become more deeply integrated into business operations and daily life, users increasingly want to understand not only the outcome generated by AI systems, but also the reasoning process behind those outcomes.

For example, if an AI system rejects a financial transaction, modifies cloud infrastructure, recommends a healthcare action, or generates legal advice, organizations and users need visibility into how that decision was made. This is why explainability, traceability, and governance are becoming essential requirements for AI product development.

Future AI systems will likely require detailed audit logs, explainable reasoning paths, human approval mechanisms, policy enforcement systems, and intervention capabilities that allow users to correct or override AI decisions when necessary.
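Several of these mechanisms (audit logs, human approval, and the ability to block an action) can be combined in a single execution gate. The sketch below uses hypothetical names throughout. It logs each proposed action together with the agent's stated rationale, requires a human decision for actions classified as high risk, and records both the decision and the outcome. How risk is classified and how the reviewer is prompted are left as assumptions; `approve` and `run` are plain callables standing in for a real review interface and a real backend.

```python
import json
import time
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class ProposedAction:
    name: str
    params: dict[str, Any]
    risk: str        # "low" or "high"; a hypothetical classification produced by some policy
    rationale: str   # the agent's stated reason, recorded for traceability


@dataclass
class OversightGate:
    """Hypothetical gate: high-risk actions need human approval, and every step is logged."""
    approve: Callable[[ProposedAction], bool]   # e.g. a prompt shown to a human reviewer
    audit_log: list[str] = field(default_factory=list)

    def _audit(self, event: str, action: ProposedAction, **extra: Any) -> None:
        # Append-only, machine-readable record of what was proposed, decided, and done.
        self.audit_log.append(json.dumps({
            "ts": time.time(), "event": event, "action": action.name,
            "params": action.params, "rationale": action.rationale, **extra,
        }))

    def execute(self, action: ProposedAction, run: Callable[[ProposedAction], Any]) -> dict[str, Any]:
        self._audit("proposed", action)
        if action.risk == "high":
            approved = self.approve(action)
            self._audit("approval_decision", action, approved=approved)
            if not approved:
                # Human override: the action is blocked and never executed.
                return {"status": "blocked"}
        result = run(action)  # must be JSON-serializable in this sketch
        self._audit("executed", action, result=result)
        return {"status": "done", "result": result}


# Usage: the reviewer declines a large transfer, so it is logged but never run.
gate = OversightGate(approve=lambda action: False)
transfer = ProposedAction("transfer_funds", {"amount": 5000, "currency": "USD"}, "high", "Pay supplier invoice")
print(gate.execute(transfer, run=lambda action: "transfer submitted"))
for entry in gate.audit_log:
    print(entry)
```

Because the proposal is logged before any decision is made, the record of what the agent attempted survives even when a human blocks the action.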

Trust in AI products will therefore depend not only on performance and accuracy, but also on transparency and controllability. Users want AI systems that they can understand, monitor, and intervene in when needed. In many cases, the ability to control or override AI behavior may become just as important as the automation itself.

5. Business Models — From Subscription Models to Usage-Based AI Economies

AI agents are also reshaping the economics of software products. Traditional software business models relied heavily on subscriptions, enterprise licensing, app purchases, advertising revenue, or seat-based pricing structures. Success was often measured by how many users installed or actively used an application.

In AI agent ecosystems, however, users may no longer consciously choose which applications they interact with. Instead, AI agents may dynamically select tools and services based on factors such as reliability, interoperability, cost efficiency, and workflow compatibility.
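How an agent weighs such factors is up to its designers. As a purely hypothetical illustration, the sketch below scores candidate tools as a weighted sum of observed reliability, relative cheapness, and whether they expose a standard interface, then picks the highest score. The tool names, metrics, and weights are invented for the example and do not describe how any particular agent selects tools.

```python
from dataclasses import dataclass


@dataclass
class ToolCandidate:
    name: str
    success_rate: float       # observed share of calls that succeeded, 0..1
    cost_per_call: float      # USD
    standard_interface: bool  # exposes a standard, machine-readable interface


def score(tool: ToolCandidate, max_cost: float, weights=(0.6, 0.3, 0.1)) -> float:
    """Weighted sum of reliability, relative cheapness, and interoperability (hypothetical weights)."""
    w_reliability, w_cost, w_interop = weights
    cheapness = 1.0 - min(tool.cost_per_call / max_cost, 1.0)
    return (w_reliability * tool.success_rate
            + w_cost * cheapness
            + w_interop * (1.0 if tool.standard_interface else 0.0))


def choose(candidates: list[ToolCandidate]) -> ToolCandidate:
    # Normalize cost against the most expensive option; fall back to 1.0 if all calls are free.
    max_cost = max(t.cost_per_call for t in candidates) or 1.0
    return max(candidates, key=lambda t: score(t, max_cost))


candidates = [
    ToolCandidate("flights_api_a", success_rate=0.99, cost_per_call=0.020, standard_interface=True),
    ToolCandidate("flights_api_b", success_rate=0.90, cost_per_call=0.005, standard_interface=False),
]
print(choose(candidates).name)
```

With the default weights, the cheaper `flights_api_b` scores about 0.77 against 0.69 for `flights_api_a`. Shifting weight toward reliability, for example to `(0.8, 0.1, 0.1)`, reverses the choice, which shows how small differences in these signals can decide which product an agent calls.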

This fundamentally changes how software products compete. In the future, product success may depend less on direct user acquisition and more on how frequently AI agents choose to call a product’s services. As a result, usage-based monetization models are likely to become increasingly important. Companies may generate revenue through API consumption, task execution frequency, AI workflow usage, token-based interactions, or agent transaction volumes rather than traditional subscription models alone.
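Usage-based monetization starts with metering. The minimal sketch below counts billable calls per customer and capability and prices them at invoice time, using `Decimal` to avoid binary floating-point rounding errors in money amounts. The price list, capability names, and customer identifier are hypothetical.

```python
from collections import Counter
from decimal import Decimal

# Hypothetical price list in USD per call; real providers define their own billable units.
PRICE_PER_CALL = {
    "search_flights": Decimal("0.002"),
    "book_hotel": Decimal("0.05"),
}


class UsageMeter:
    """Counts billable calls per (customer, capability) and prices them at invoice time."""

    def __init__(self) -> None:
        self.calls: Counter = Counter()

    def record(self, customer: str, capability: str, count: int = 1) -> None:
        self.calls[(customer, capability)] += count

    def invoice(self, customer: str) -> Decimal:
        total = Decimal("0")
        for (cust, capability), n in self.calls.items():
            if cust == customer:
                total += PRICE_PER_CALL[capability] * n
        # Round to cents for the invoice.
        return total.quantize(Decimal("0.01"))


meter = UsageMeter()
meter.record("acme-agent", "search_flights", count=10_000)
meter.record("acme-agent", "book_hotel", count=40)
print(meter.invoice("acme-agent"))  # 22.00 (10,000 * $0.002 + 40 * $0.05)
```

Because revenue here scales with calls rather than seats, every failed or abandoned agent call is directly lost income, which ties pricing closely to the reliability and integration concerns above.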

This trend is already visible in cloud computing, where consumption-based pricing has long been standard, and in AI infrastructure, where model APIs are commonly billed per token. At the same time, interoperability becomes critical. Products that remain isolated within closed ecosystems may struggle compared to products designed to integrate seamlessly across multiple AI agent environments.

This is one reason why open standards such as MCP are attracting significant attention within the AI industry. Products that AI agents can easily connect to, trust, and orchestrate may become far more valuable within future AI ecosystems.

In the AI agent era, being discoverable and usable by AI systems may become just as important as being discoverable by human users.

Conclusion

The rise of AI agents is fundamentally changing the nature of digital products. Historically, products were directly selected, opened, and operated by users. In the future, products may increasingly be selected, combined, and executed by AI systems acting on behalf of users. This means the future of product strategy is no longer simply about adding more features or creating visually attractive applications.

Instead, successful AI agent-based products will need to integrate seamlessly with AI ecosystems, provide AI-friendly architectures, support trustworthy governance structures, and offer transparent data management that keeps users in control.

Ultimately, the products that succeed in the AI agent era may not be the products with the largest number of features. They will likely be the products that AI systems can easily understand, trust, and integrate into autonomous workflows while still maintaining transparency, reliability, and human oversight.