Introduction

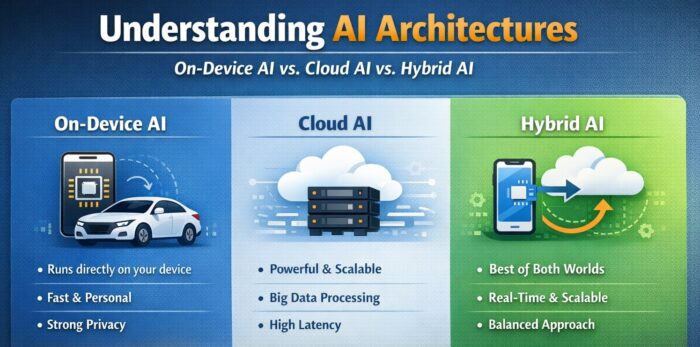

As artificial intelligence becomes deeply embedded in modern products and services, the conversation has clearly shifted. The question is no longer whether organizations should adopt AI, but rather where AI should run and how it should be architected to best support the service being delivered. The execution environment of AI models—whether on a user’s device, in the cloud, or across both—directly affects performance, cost efficiency, data governance, security, and overall user experience.

This architectural decision is especially critical for AI-driven services and AI agents that interact directly with users. Internal analytics or batch-based automation may function well in centralized cloud environments, but customer-facing services that demand real-time responsiveness or rely heavily on personal data require a more deliberate structural choice. In this article, we explore three dominant AI deployment architectures—On-device AI, Cloud AI, and Hybrid AI—while examining their strengths, limitations, and the key data management considerations behind each approach.

Why AI Architecture Matters More Than Ever

AI has matured beyond experimental use cases and is now a core component of digital products across industries. From recommendation engines and voice assistants to predictive analytics and autonomous systems, AI is expected to operate reliably, securely, and at scale. As expectations rise, architectural decisions play an increasingly central role in determining whether AI enhances or undermines a service.

Where AI runs determines how quickly decisions can be made, how much data must be transmitted, how exposed sensitive information becomes, and how easily systems can evolve over time. A poorly chosen architecture can lead to unnecessary latency, inflated cloud costs, regulatory risk, or brittle systems that struggle to scale. Conversely, a well-aligned architecture allows AI capabilities to feel seamless, responsive, and trustworthy to users.

On-Device AI: Maximizing Personalization and Real-Time Performance

On-device AI refers to an architectural approach in which AI models run directly on end-user devices rather than relying on remote cloud servers. Smartphones, laptops, vehicles, wearables, and IoT devices increasingly ship with hardware that accelerates AI workloads, such as GPUs, NPUs, or other specialized accelerators, making local inference feasible at scale.

The most significant advantage of on-device AI is its ability to deliver near-instant responses. Because data is processed locally, inference avoids the network round trip, so response time depends on the device's own compute rather than on connectivity, and applications can react immediately to user behavior or environmental changes. This is particularly valuable in scenarios such as speech recognition, real-time translation, camera enhancements, augmented reality, and context-aware recommendations, where even small delays can degrade user experience.

On-device AI also provides a strong privacy advantage. Since sensitive data does not need to leave the device, the risk associated with transmitting personal or regulated information is significantly reduced. This architectural choice aligns well with growing regulatory pressure around data protection, including GDPR and CCPA, and helps build user trust in AI-powered features.

However, on-device AI comes with clear constraints. Devices have limited computational resources, memory, and battery life compared to cloud infrastructure. As a result, running large-scale models or continuously retraining them on the device is typically impractical. In most real-world implementations, on-device AI focuses on inference rather than training, with models being trained elsewhere and deployed in optimized form to devices. This limitation also makes data standardization and quality control especially important, as device-level models depend on clean, predictable input to function reliably.
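To make the resource constraint concrete, the following minimal sketch estimates how much memory a model's weights occupy at different numeric precisions. It assumes that "optimized form" includes weight quantization, which the text above does not specify. The function names, the 100-million-parameter model, and the 150 MB budget are all hypothetical.

```python
def weight_size_mb(num_params: int, bits_per_weight: int) -> float:
    """Approximate weight storage in megabytes (1 MB = 10**6 bytes).

    Ignores activations, runtime overhead, and file-format metadata.
    """
    return num_params * bits_per_weight / 8 / 1_000_000


def fits_on_device(num_params: int, bits_per_weight: int, budget_mb: float) -> bool:
    """True if the weights alone fit within a hypothetical memory budget."""
    return weight_size_mb(num_params, bits_per_weight) <= budget_mb


if __name__ == "__main__":
    params = 100_000_000  # hypothetical model size
    for bits in (32, 16, 8):
        size = weight_size_mb(params, bits)
        print(f"{bits}-bit weights: {size:.0f} MB, fits: {fits_on_device(params, bits, 150.0)}")
```

Under these assumptions, the same model needs 400 MB at 32-bit precision but 100 MB at 8-bit, which is the kind of gap that decides whether local inference is practical. Lower precision can also reduce accuracy, so the trade-off has to be measured per model.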

Cloud AI: Centralized Power for Scale and Learning

Cloud AI remains the most widely adopted AI architecture, particularly for enterprise applications and data-intensive workloads. In this model, AI systems run on centralized cloud infrastructure whose compute and storage can be scaled on demand, far beyond what any single device offers. This environment is well suited to training complex models, processing massive datasets, and continuously improving AI performance over time.

One of the strongest advantages of cloud AI is its ability to support large-scale learning. Training advanced machine learning and deep learning models often requires distributed computing resources that far exceed the capabilities of individual devices. Cloud platforms make it possible to iterate rapidly, deploy updates efficiently, and manage models from a single control point.

Cloud AI also simplifies data governance and operational management. When data collection, preprocessing, model training, and deployment are centralized, organizations can enforce consistent policies, monitor performance more easily, and align AI initiatives across teams. This is why many chatbots, recommendation engines, fraud detection systems, and predictive analytics platforms are built primarily on cloud-based AI architectures.

Despite these strengths, cloud AI introduces trade-offs that cannot be ignored. Because data must travel from the device to the cloud and back, latency becomes an inherent challenge, especially for real-time or interactive services. Additionally, transmitting personal or sensitive data to centralized servers raises security and compliance concerns, requiring robust encryption, access control, and governance frameworks. Cloud AI also depends heavily on reliable network connectivity, which can limit its effectiveness in environments where connectivity is unstable or unavailable.
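The latency trade-off can be expressed as simple arithmetic. The sketch below adds up the components of one cloud request and compares the total with a response-time budget. The function names and every number in the example are hypothetical and chosen only to illustrate the calculation.

```python
def cloud_latency_ms(network_rtt_ms: float, server_inference_ms: float,
                     queueing_ms: float = 0.0) -> float:
    """End-to-end latency of one cloud request: round trip + queueing + inference."""
    return network_rtt_ms + queueing_ms + server_inference_ms


def within_budget(latency_ms: float, budget_ms: float) -> bool:
    """True if a request completes within the service's response-time budget."""
    return latency_ms <= budget_ms


if __name__ == "__main__":
    budget = 100.0  # hypothetical budget for an interactive feature
    cloud = cloud_latency_ms(network_rtt_ms=80.0, server_inference_ms=20.0, queueing_ms=5.0)
    local = 60.0    # hypothetical on-device inference time, no network involved
    print("cloud:", cloud, within_budget(cloud, budget))
    print("local:", local, within_budget(local, budget))
```

With these illustrative figures, the cloud path misses the budget even though server-side inference is three times faster than the device, because the network round trip dominates the total.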

Hybrid AI: Balancing Speed, Privacy, and Scalability

Hybrid AI combines the strengths of on-device and cloud architectures by assigning different responsibilities to each environment. In this model, time-critical and privacy-sensitive tasks are handled locally on the device, while compute-intensive training and long-term analysis are performed in the cloud. Updated models are then periodically distributed back to devices.

This approach allows organizations to deliver fast, personalized experiences without sacrificing the ability to learn from large-scale data. For example, an AI service might process user interactions locally to provide immediate recommendations or voice recognition, while aggregated and anonymized data is sent to the cloud for deeper analysis and model improvement. By separating inference from training in this way, hybrid AI can meet demanding performance, privacy, and scalability requirements simultaneously.
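The split between local processing and aggregated upload can be sketched as follows. This is a minimal illustration, not a description of any particular product: the `LocalEventBuffer` class, its method names, and the event fields are hypothetical. It keeps raw events on the device and exposes only per-event-type counts for upload.

```python
from collections import Counter
from typing import Dict, List


class LocalEventBuffer:
    """Keeps raw interaction events on the device and uploads only counts."""

    def __init__(self) -> None:
        self._events: List[Dict[str, str]] = []

    def record(self, user_id: str, event_type: str) -> None:
        # Raw events, including the user identifier, stay in this buffer.
        self._events.append({"user_id": user_id, "event_type": event_type})

    def build_upload(self) -> Dict[str, int]:
        """Return per-event-type counts with no identifiers, then clear the buffer."""
        counts = Counter(event["event_type"] for event in self._events)
        self._events.clear()
        return dict(counts)


if __name__ == "__main__":
    buffer = LocalEventBuffer()
    buffer.record("user-123", "play")
    buffer.record("user-123", "skip")
    buffer.record("user-123", "play")
    print(buffer.build_upload())
```

Dropping identifiers and aggregating is only a first step. Small counts can still identify individuals, so production systems typically need stronger measures than this sketch shows.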

However, hybrid AI also introduces additional complexity. Managing data flows between devices and cloud systems requires clear policies about what data is stored locally, what data is transmitted, and when synchronization occurs. Model lifecycle management becomes more intricate, as teams must coordinate training schedules, deployment strategies, and version compatibility across distributed environments. Without careful design, hybrid systems can become difficult to operate and maintain.
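One way to keep this complexity manageable is to write the data-flow and compatibility rules down as explicit, testable objects. The sketch below is an assumption about how such rules might be encoded. `SyncPolicy`, `ModelRelease`, and their fields are hypothetical names, not part of any specific framework.

```python
from dataclasses import dataclass
from typing import Any, Dict, FrozenSet, Tuple


@dataclass(frozen=True)
class SyncPolicy:
    upload_fields: FrozenSet[str]  # fields allowed to leave the device
    sync_interval_hours: int       # how often the device synchronizes


@dataclass(frozen=True)
class ModelRelease:
    version: str
    min_runtime: Tuple[int, int]   # oldest on-device runtime (major, minor) supported


def filter_for_upload(record: Dict[str, Any], policy: SyncPolicy) -> Dict[str, Any]:
    """Keep only allow-listed fields; everything else stays local by default."""
    return {key: value for key, value in record.items() if key in policy.upload_fields}


def can_deploy(device_runtime: Tuple[int, int], release: ModelRelease) -> bool:
    """A release is deployable only to devices at or above its minimum runtime."""
    return device_runtime >= release.min_runtime


if __name__ == "__main__":
    policy = SyncPolicy(frozenset({"event_type", "model_version"}), sync_interval_hours=24)
    record = {"event_type": "play", "model_version": "v7", "location": "home"}
    print(filter_for_upload(record, policy))
    print(can_deploy((1, 9), ModelRelease("v8", (2, 0))))
```

The allow-list design means a newly added field stays on the device until someone deliberately permits its upload, which is a safer default than a deny-list.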

Choosing the Right AI Structure

Ultimately, the choice of AI architecture is not purely a technical decision. It is a strategic one that must align with service goals, user expectations, and data characteristics. Real-time interaction, personalization depth, regulatory constraints, and operational maturity all influence which architecture makes the most sense.

Services that prioritize immediacy and user context often benefit from on-device or hybrid approaches. Data-heavy analytical platforms may lean toward cloud AI for its scalability and centralized control. Many modern AI-powered products find their optimal balance in hybrid architectures, where responsibilities are clearly divided based on what each environment does best.
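The guidance above can be condensed into a rough first-pass heuristic. The function below is a deliberately simplified sketch: its name and its three boolean inputs are hypothetical, and it ignores factors such as regulatory constraints and operational maturity that a real decision would also weigh.

```python
def suggest_architecture(needs_immediacy: bool, relies_on_user_context: bool,
                         data_heavy_analytics: bool) -> str:
    """Return a starting-point suggestion: "on-device", "cloud", or "hybrid"."""
    local_pull = needs_immediacy or relies_on_user_context
    if local_pull and data_heavy_analytics:
        return "hybrid"     # both environments have work they do best
    if local_pull:
        return "on-device"  # a hybrid design may also be reasonable here
    # Assumption: with no pull toward the device, default to the cloud.
    return "cloud"


if __name__ == "__main__":
    print(suggest_architecture(True, True, False))   # e.g. a voice assistant
    print(suggest_architecture(False, False, True))  # e.g. an analytics platform
    print(suggest_architecture(True, False, True))   # e.g. a recommendation service
```

The output is a conversation starter rather than a verdict. Its main value is forcing the service requirements to be stated explicitly before an architecture is chosen.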

What matters most is recognizing that AI architecture and data management are inseparable. Decisions about where AI runs inevitably shape how data is collected, processed, protected, and governed. Treating these concerns as a unified design challenge leads to more resilient and sustainable AI systems.

Conclusion

As AI-driven services continue to mature, the era of relying on a single, uniform AI architecture is coming to an end. Competitive advantage increasingly comes from tailoring AI structures to the specific needs of each service and designing data flows with precision and intent.

There is no universally “correct” choice between on-device AI, cloud AI, and hybrid AI. Each architecture offers distinct strengths and trade-offs. The most successful organizations are those that understand their service context, evaluate their data landscape honestly, and choose an architecture that can evolve alongside their product.

In the AI era, performance is not defined solely by the sophistication of the model. It is defined by how intelligently that model is placed, how responsibly data is handled, and how well the entire system aligns with real-world service demands.